Scientific Process

TNU Guidelines

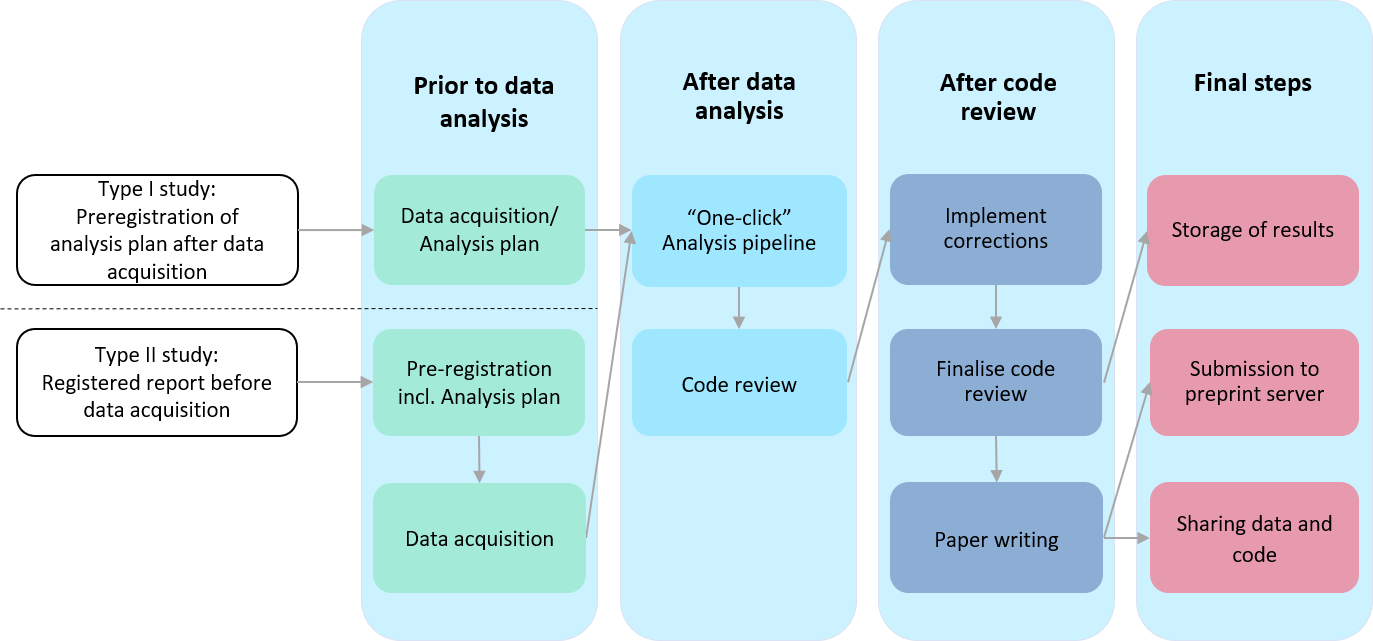

The following flowchart summarises the scientific process at the TNU:

Preregistration of analysis plans and registered reports

Prior to data analysis, an ex ante analysis plan is preregistered: the plan is uploaded to a repository, where it is time-stamped, and it is made publicly accessible when the study’s results are submitted for publication. Whenever the constraints of the specific project allow, studies are submitted to journals as registered reports prior to data acquisition.

Code review

If possible, a "one-click" analysis pipeline is created that runs all analyses, from the raw data to the results figures.
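As a rough illustration of what a "one-click" pipeline can look like, the sketch below exposes a single function that runs every step in a fixed order, from a raw data file to a results file. The function names, the CSV layout with a `value` column, and the `results.json` output are all assumptions made for this example and are not prescribed by the guidelines; a real pipeline would chain preprocessing, modelling, and figure generation in the same way.

```python
from pathlib import Path
import csv
import json
import statistics


def load_raw(raw_file: Path) -> list[float]:
    """Read the 'value' column of a CSV file (assumed layout, for illustration)."""
    with raw_file.open(newline="") as fh:
        return [float(row["value"]) for row in csv.DictReader(fh)]


def analyse(values: list[float]) -> dict:
    """Placeholder analysis step: summary statistics only."""
    return {"n": len(values), "mean": statistics.fmean(values)}


def run_pipeline(raw_file: Path, out_dir: Path) -> Path:
    """Single entry point: run every step in order and write the results."""
    out_dir.mkdir(parents=True, exist_ok=True)
    results = analyse(load_raw(raw_file))
    out_file = out_dir / "results.json"
    # Sorted keys keep the output stable across runs and systems.
    out_file.write_text(json.dumps(results, sort_keys=True, indent=2))
    return out_file
```

A call such as `run_pipeline(Path("raw.csv"), Path("results"))` (hypothetical paths) would then regenerate all outputs without manual intermediate steps, which is what makes the later re-run by a code reviewer practical.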

Before submitting a paper for publication (and before submitting a revision), the analysis pipeline is checked by a second person who has not been involved in data analysis. This process has the following two general aims:

1. Re-run the entire analysis pipeline on a different system and check that the results are reproducible.

2. Perform sanity checks of the code and verify that there are no obvious flaws in the analysis stream.
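One possible way to support the first aim is to compare the output files of the original run with those of the reviewer's re-run. The sketch below does this with SHA-256 checksums; the directory layout and function names are hypothetical. Note that a byte-for-byte comparison is strict: small floating-point differences between systems can change a file without indicating a real problem, so a tolerance-based comparison of the numerical results may be more appropriate in practice.

```python
import hashlib
from pathlib import Path


def file_digest(path: Path) -> str:
    """Return the SHA-256 checksum of a file's contents."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


def snapshot(results_dir: Path) -> dict[str, str]:
    """Map each file under results_dir (by relative path) to its checksum."""
    return {
        p.relative_to(results_dir).as_posix(): file_digest(p)
        for p in sorted(results_dir.rglob("*"))
        if p.is_file()
    }


def compare_runs(original: Path, rerun: Path) -> list[str]:
    """List files that differ between two runs or exist in only one of them."""
    a, b = snapshot(original), snapshot(rerun)
    return sorted(name for name in a.keys() | b.keys() if a.get(name) != b.get(name))
```

An empty list from `compare_runs` means the two result directories are identical; any listed file is a starting point for the reviewer to investigate.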

After code review, any necessary corrections are implemented and checked again by the code reviewer before the paper is finalised and submitted to the co-authors.

Final steps

The results and analysis pipeline are documented and stored in line with our TNU-internal Working Instructions.

The written paper is submitted to a preprint server before it is submitted to a peer-reviewed journal.

Data are shared by default, but sharing follows the regulations described in our “SOP Data Sharing” and must first be approved internally.